In my spare time I like to search for vulnerabilities in web applications as part of various vulnerability disclosure programs (VDPs). One program I had been working on introduced me to the SOGo webmail client. Inverse, who maintain SOGo along with a community of developers, describe it as “a fully supported and trusted groupware server with a focus on scalability and open standards”. It offers more than just webmail, but that is what we are focusing on in this article. SOGo is open source and there are some large companies and organisations that use it. One of those organisations is the one that brought the application to my attention through their VDP.

I cannot disclose the details of the program, but I did find several vulnerabilities, one of which was critical. Unfortunately the webmail client was deemed out of scope for the VDP. The issues were raised with Inverse and fixed promptly but I chose to part ways with the VDP.

Following successful remedial work by Inverse, I decided to read some of the source code, starting with the fixes that had been made; it was open source after all. I was no longer working on the original VDP, so instead I used an instance of SOGo managed by Gandi, a domain name registrar, hosting and email provider.

The SOGo webmail client application is written in Objective-C, which I have no experience in. That aside, I found the file that had been amended as part of the fix and started reading. It quickly became apparent that there could be further issues with payloads similar to those I had used previously.

The code I was interested in was the HTML parser for viewing emails within the webmail client. As emails can contain JavaScript, there is a high risk for users if email content is not properly sanitised before being displayed. I had realised that the application code was effectively blacklisting certain tags and attributes. If I could find an attribute that was capable of executing JavaScript and was not on the blacklist, I would be able to perform a Cross Site Scripting (XSS) attack. A common test when searching for XSS vulnerabilities is to embed the following:

<img src onerror='alert()'/>

In an unsanitised situation, the above would immediately execute the JavaScript alert(). However, in the SOGo application code, the ‘onerror’ attribute is blacklisted, so the attribute and its contents are removed before the email is presented to the user, eliminating the threat. What interested me the most was that they were using a blacklisting approach. The blacklisting approach effectively says: assume everything is safe unless it is one of these things we know to be unsafe. No matter how well you think you have things covered, can you be 100% sure that you have not missed one tag or event that could bypass your security? I would say no. Instead, it is generally best practice to employ a whitelisting approach: assume everything is unsafe unless it is one of these things we know to be safe. The chance of something getting through is hugely reduced and confidence in the code is increased.
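The difference between the two approaches can be sketched in a few lines of Python. This is only an illustration: SOGo's parser is written in Objective-C, and the two lists below are hypothetical, not the ones SOGo uses. The attribute set includes `onbegin`, an event handler this article goes on to use.

```python
# Hypothetical lists for illustration only; these are NOT SOGo's actual lists.
BLACKLIST = {"onerror", "onload", "onclick", "onmouseover"}
WHITELIST = {"src", "alt", "width", "height", "title"}


def filter_with_blacklist(attributes):
    """Keep every attribute except the ones known to be unsafe."""
    return {k: v for k, v in attributes.items() if k.lower() not in BLACKLIST}


def filter_with_whitelist(attributes):
    """Drop every attribute except the ones known to be safe."""
    return {k: v for k, v in attributes.items() if k.lower() in WHITELIST}


attrs = {"src": "", "onerror": "alert()", "onbegin": "alert()"}

# The blacklist removes onerror but lets the unanticipated onbegin through.
print(filter_with_blacklist(attrs))

# The whitelist never needed to know that onbegin exists.
print(filter_with_whitelist(attrs))
```

The blacklist only fails safe for events its author thought of, whereas the whitelist fails safe for everything else.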

With the knowledge of the approach being used, I began to research HTML events, learning about obscure ones that I have never used or come across before. Then it was a case of comparing these against the blacklist in the SOGo code. It didn’t take long to find ones that were not present.

And so it ‘begins’

Writing some very limited JavaScript to start with, I identified that the “onbegin” event does not get removed or rewritten. If I send an email with the contents shown below, a user simply needs to open the email and the JavaScript payload would be executed.

<svg>

<animate attributename="x" dur="1s" onbegin="alert()">

</animate>

</svg>

Half the job was done. Now I just needed to confirm I could capture something useful and send it externally in the way an attacker would. A common approach is to send the user’s cookies to a server managed by the attacker so the session can be hijacked. However, the application correctly marks the session cookie as HttpOnly, which means that JavaScript does not have access to it. The next best thing is to have the JavaScript use as many of the legitimate functions of the webmail client as possible and send the information out to the attacker’s server. On the instance of SOGo I was using there was no content security policy (CSP) in place, so this was a viable option. More on CSP later.

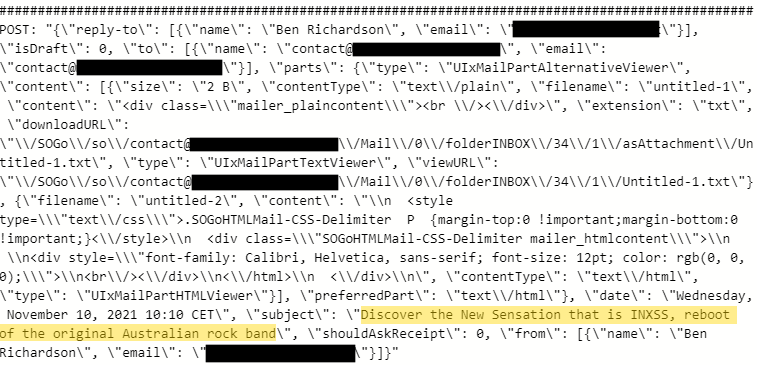

I successfully modified the script so that it could read and delete emails from the inbox, and so that it could send data out to an external server. In the image below you can see two emails in the victim’s inbox. One is benign and the other contains a malicious payload.

After opening the malicious email, the contents of the other email in the inbox are sent to the attacker’s server. The image below shows the content captured by the attacker (the email subject is highlighted in yellow).

With the impact of the vulnerability determined, I contacted Gandi, whose instance of SOGo I had been experimenting on. They had information at /.well-known/security.txt (a reserved address for security related information) which allowed me to contact the right people quickly. Within a few hours I had provided all of the information on the issue and a dialogue was open. Gandi agreed that they would contact Inverse to raise the vulnerability with them.

Use the Source, Luke

While open source code is great for many reasons, there is a downside which in this instance is all too clear. The open nature of the code meant that I was able to find the vulnerability not through mass trial and error, but through targeted attempts based on my understanding of what the application was doing. Arguably, this makes the code easier to attack. That is not to say that open source code is less secure, merely that if vulnerabilities are there, they can be easier to find than in closed software. It is also often the case that vulnerabilities are found and fixed more swiftly in open source than in closed source software. What is important, if you are using any kind of third party code or software, is that you evaluate it yourself and always keep it up to date. With open source, it is harder to announce security vulnerabilities without giving attackers precise ammunition against users still on older versions. This is because the fix is there for all to see, and often the fix will reveal the attack vector.

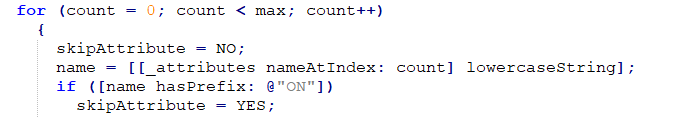

In the course of looking at this vulnerability, I saw something interesting which was likely the root cause of how this issue came to be. The HTML parser has the concept of skipping HTML attributes that it deems unsafe. As I mentioned earlier, a blacklisting approach has been taken. But on further examination, it appears that at some point a much wider blacklist was attempted, to block any attribute beginning with “on”. This would effectively block every event handler attribute in which an attacker would be looking to inject their malicious JavaScript. However, one little mistake left the check ineffective. In the code block below, the “if” statement compares a lowercased string (the HTML attribute name) with an uppercase prefix. As I said, I don’t really know Objective-C, but I’m pretty sure that code is never going to evaluate to true!

As it turns out, the fix (fix #1) that SOGo implemented almost picked up on this: an almost identical “else if” has been added, but this time with the correct casing, and now all attributes beginning with “on” are blocked.
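The casing mistake is easy to reproduce in any language. The following Python sketch mirrors only the logic described here; it is not a translation of the SOGo source, and the function names are made up for illustration.

```python
def is_event_attribute_buggy(name):
    """Mirrors the described bug: a lowercased name is compared with an
    uppercase prefix, so the test can never succeed."""
    return name.lower().startswith("ON")


def is_event_attribute_fixed(name):
    """Same check with the prefix in the matching (lower) case."""
    return name.lower().startswith("on")


for attribute in ("onerror", "ONBEGIN", "OnClick", "src"):
    print(attribute, is_event_attribute_buggy(attribute), is_event_attribute_fixed(attribute))
```

Whatever the input, the first function returns False, which is why the wider blacklist never took effect.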

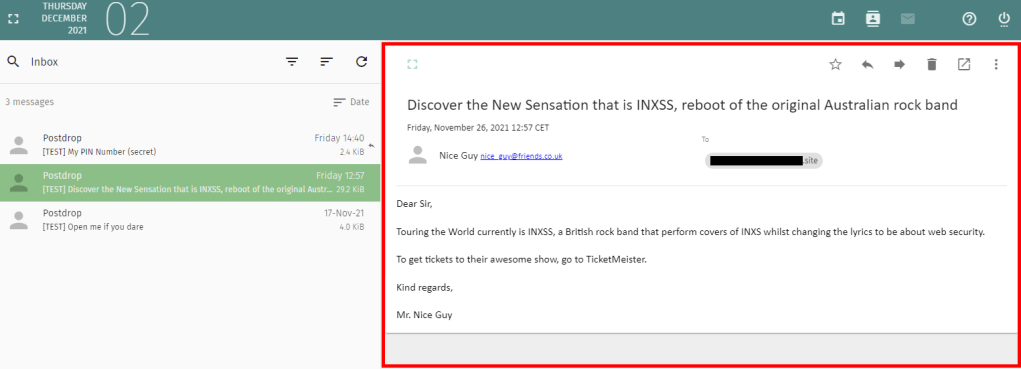

Following the implementation of the fix I further examined the code, looking for other ways around the blacklist. Before too long, I found another way through. Without reading the source code, I would never have identified it. This time it did require the recipient of the email to click a link within the email; however, as my demonstration video at the end of this article shows, this is easy to achieve. I noticed that “href” and “action” attributes were allowed to exist as long as they met a single condition: they must contain the text ‘://’. With that I quickly discovered that you can use JavaScript in the “action” attribute of a form tag. Again it was possible to send all emails out to my server, or alternatively to try to steal the user’s credentials by manipulating the display of the email. As styling is not forbidden and form tags are allowed, it was possible to apply styles that would overlay the whole of the email pane with your own markup.

In the example above, everything in the red border is actually attacker supplied HTML. Specifically, the action icons have a form tag around them with JavaScript in the action. When the user clicks the bin icon, the JavaScript constructs a login screen which is injected into the body of the existing page. This results in the following experience (note the URL has not changed which gives the victim no reason to distrust the login form).

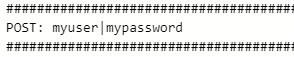

The login form acts as a keylogger and sends the victim’s username and password to the attacker’s server, then sends the user back to the inbox, none the wiser.
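To see why the ‘://’ condition on “href” and “action” described earlier is so weak, here is a minimal Python sketch. The payload is a hypothetical value that satisfies the condition, not necessarily the one I used, and `SAFE_SCHEMES` is an assumed list. The second function shows one common alternative, which is to parse the URL and whitelist its scheme.

```python
from urllib.parse import urlsplit

# Assumed set of acceptable schemes, for illustration only.
SAFE_SCHEMES = {"http", "https"}


def passes_substring_check(value):
    """The condition as described: the value only has to contain '://'."""
    return "://" in value


def passes_scheme_whitelist(value):
    """Parse the value and accept it only if its scheme is known to be safe."""
    scheme = urlsplit(value.strip()).scheme.lower()
    return scheme in SAFE_SCHEMES


payload = "javascript:alert('://')"  # hypothetical payload

print(passes_substring_check(payload))   # the substring check lets it through
print(passes_scheme_whitelist(payload))  # the scheme check rejects it
print(passes_scheme_whitelist("https://example.com/inbox"))
```

Note that this scheme check also rejects relative URLs, because they have no scheme; whether that is acceptable depends on the application.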

I updated Gandi with the information about action being vulnerable and they raised it with SOGo. Gandi did their own due diligence as well and identified another entry point. The “formaction” attribute is one that can execute JavaScript and doesn’t begin with “on”, so it was missed by the blacklisting. A further fix was applied (fix #2), shortly followed by a release (v5.3.0) on 19th November.

Unfortunately, the fix for action and formaction attributes relied on the JavaScript keyword being at the start of the value. Adding whitespace at the start (immediately after the quote marks) easily bypassed the checks and allowed the malicious payload through. I highlighted this to Inverse and they implemented a further fix (fix #3), which was released (v5.4.0) on 16th December.
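The whitespace bypass works because browsers tidy up a URL before parsing it: leading and trailing spaces and control characters are dropped, and tabs and newlines are removed wherever they appear. A filter that looks at the raw string therefore sees something different from what the browser runs. The Python sketch below illustrates the idea; both functions are hypothetical and neither is taken from the SOGo fixes.

```python
# Characters a browser strips from both ends of a URL: C0 controls and space.
LEADING_TRAILING_JUNK = "".join(chr(code) for code in range(0x21))


def naive_is_javascript_url(value):
    """Prefix check on the raw attribute value."""
    return value.lower().startswith("javascript:")


def normalised_is_javascript_url(value):
    """Approximate the browser's clean-up first, then apply the prefix check."""
    cleaned = value.strip(LEADING_TRAILING_JUNK)
    for char in ("\t", "\n", "\r"):
        cleaned = cleaned.replace(char, "")
    return cleaned.lower().startswith("javascript:")


payload = " javascript:alert()"  # note the leading space

print(naive_is_javascript_url(payload))       # the raw check misses it
print(normalised_is_javascript_url(payload))  # the normalised check catches it
```

Even with normalisation this is still a blacklist of one scheme, so whitelisting the schemes you expect remains the more robust design.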

Once I was happy that the XSS vulnerabilities had been fixed, I revisited the concept of styling emails to hijack areas of the screen that the victim trusts. No longer able to inject JavaScript I simply used the same approach as described earlier but pointed the form action to the attacker’s website, which would display a login screen styled as per the SOGo webmail client. This is akin to many phishing attacks where a person will send an email purporting to be from an organisation, encouraging the victim to click a link. The user would be presented with what appears to be the legitimate website but instead is used to steal their credentials. The concept here is exactly the same, but the difference which makes this more successful for the attacker, is that the victim does not need to click a link inside the email (where users will be cautious). Even savvy users would expect the webmail’s own delete button to delete the email and not be capable of sending them to another website. Below shows virtually the same experience as earlier, but with the hostname changing to the attacker’s website. The ability to hijack outside of the email body was addressed by this fix (fix #4), released in v5.5.0.

At this point it is worth mentioning the CSP header, as touched on earlier. CSP is a way of telling the browser what resources your website is allowed to request. These days it is a very useful tool to help prevent XSS. In the examples given here, a CSP would likely have prevented the XSS vulnerabilities from being usable. At the very least it would have made exploitation a lot harder. For example, if the CSP prevented the attacker’s server from being called, there would be no way of exfiltrating the data from the webmail client. Yes, there would still be an XSS hole, but it would be defused. That said, CSP should not be solely relied upon and systems should still be regularly tested for XSS vulnerabilities. But in instances where vulnerabilities are introduced, CSP can be a very useful safety net.
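As a rough illustration, a restrictive policy could be assembled like this. The directive values are an assumed starting point rather than a policy tested against SOGo; a real deployment would need to tune it to what the application actually loads.

```python
# Assumed starting policy for illustration; not a SOGo recommendation.
CSP_DIRECTIVES = {
    "default-src": ["'self'"],
    # No 'unsafe-inline' here, so inline event handlers such as onbegin=
    # and javascript: URLs are refused by the browser.
    "script-src": ["'self'"],
    # Limits fetch/XHR destinations, which blocks one exfiltration route.
    "connect-src": ["'self'"],
    # Forms may only submit back to the site itself.
    "form-action": ["'self'"],
    # Disallows <embed> and <object> content entirely.
    "object-src": ["'none'"],
}


def build_csp_header(directives):
    """Join the directives into a Content-Security-Policy header value."""
    return "; ".join(
        f"{name} {' '.join(sources)}" for name, sources in directives.items()
    )


print("Content-Security-Policy: " + build_csp_header(CSP_DIRECTIVES))
```

The resulting value is what the server would send in the `Content-Security-Policy` response header.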

Some time later…

As is normal with vulnerability disclosure, I needed to wait a period of time before I could publish this article. During that time I occasionally dipped back into looking for further exploits. During my original investigations I became aware that if I could determine the ID of a malicious email that had been sent, I would be able to embed a malicious SVG and have it execute via an embed tag. This was because the attachments are served on the same domain and have a predictable URL (which includes the email ID, an integer). Requests to the SVG would send along the relevant auth cookie and allow script to be run under the victim’s context (another XSS attack). However, without being able to run any script from the email body, I was at a loss as to how I could predetermine the URL of the malicious attachment I was sending. But looking at the code again, I realised that I didn’t need to know the full URL, thanks to the way it handles images embedded in emails.

Within emails you can embed images in the body content in different ways. One way is using a Content-ID. This is where you attach a file as a regular attachment and then reference that attachment using a Content-ID.

<img src="cid:filename.jpg" />

The SOGo application code normally rewrites “src” attributes to “unsafe-src” to prevent them being rendered. However, there is a special clause which allows them through if the value is a CID and a file with that name is attached. Suddenly, I didn’t need to know the full URL of the SVG on the server, just the filename (which the sender controls). I sent a test email with the following body content and it worked.

<embed type="image/svg+xml" src="cid:my-really-safe-file.svg"></embed>

It took a few tweaks to the payload I used in the first hack but then I was successfully sending the contents of other emails out to my external server again. All the victim had to do was open the email. I notified Inverse and a fix was applied shortly after (fix #5).
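The rewriting rule, as I understood it, can be sketched in Python as follows. The function and its `img_only` flag are hypothetical: the flag shows one possible tightening (only honouring the CID exception on img tags, where browsers do not run scripts inside an SVG), and it is not a description of what fix #5 actually does.

```python
def src_attribute_name(tag, value, attached_filenames, img_only=False):
    """Return the attribute name to emit for a src attribute.

    Sketch of the described rule: src becomes unsafe-src unless the value is
    a cid: reference to a file attached to the email.
    """
    is_cid = value.lower().startswith("cid:")
    if is_cid and value[4:] in attached_filenames:
        # Hypothetical tightening: only trust the exception on <img>.
        if not img_only or tag.lower() == "img":
            return "src"
    return "unsafe-src"


attached = {"filename.jpg", "my-really-safe-file.svg"}

# As described, the exception applies regardless of the tag it appears on.
print(src_attribute_name("embed", "cid:my-really-safe-file.svg", attached))

# With the hypothetical tightening, the embed tag no longer benefits.
print(src_attribute_name("embed", "cid:my-really-safe-file.svg", attached, img_only=True))
print(src_attribute_name("img", "cid:filename.jpg", attached, img_only=True))
```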

It is important to remember the threat SVGs can pose. If you allow an external actor to supply an SVG which is subsequently displayed to the user in a way that will execute internal scripts, mitigations need to be put in place. There are numerous ways of dealing with this, which are beyond the scope of this article. If in doubt, block external SVGs.

During the course of my experiments, I always acted on my own Gandi mailbox and did not attempt to access the data of any other user. Both Gandi and Inverse reacted quickly when these issues were brought to their attention. I notified both companies of the intention to publish this article and allowed 90 days from reporting the last critical issue before doing so. This was to allow time to fix vulnerabilities, roll out releases and for consumers to upgrade their instances of SOGo.

For a full demonstration of the XSS vulnerabilities discussed in this article, watch the video below.

For a demonstration of stealing credentials by hijacking the email buttons, watch the second video below.
